The LLM Problem

Large Language Models are constantly being pushed on everyone. It used to be just developers, as we were using them to do some advanced searches. It allowed us to bypass the absolute growing toxicity of Reddit and Stack Overflow; due to the increased gamification of points, Mods and try-hards were quick to dismiss dumb questions for extra clout points, and Admins of these groups were not helping, and, in a lot of cases, they were the problem in the first place.

Along came a new contender in the space in 2022: ✨ChatGPT✨. This was something entirely new. We could ask it a question, and it would give us a response, in English, with something *somewhat* close to what we were looking for. It wasn't right by any means, and it kind of didn't understand context at all. After all, every time you put a new input into the system, it forgot what you said previously pretty quickly.

It was like magic. No more spending an hour searching for a very specific error message. You could paste in the error message and a little bit of context and it would give you a general idea. It didn't solve the issue immediately, but it got you on the right path.

It only got better with each iteration. ChatGPT 4.0 understood context more, and they started keeping it up-to-date with the latest data. Eventually, they let it loose on scouring the web for information. GPT-4o made it even better, to the point where it was almost like it knew what problems you were going through on your development journey. Memory got better.

Then... it just stopped. We hit the plateau. They released ChatGPT 4.5, and it wasn't any better. In fact, it was worse. It was like they had kneecapped the very foundation of the LLM through requirements to hold it back in certain ways. I'm not saying it wasn't required. After all, ChatGPT has been blamed for the suicides of impressionable young adults. ChatGPT grew so rapidly that not even the quick-to-act corporate movie lobby could move quickly enough to take down this massive breach of IP rights, and, by the time groups like Disney and Universal waded into the mix, it was already too late: groups like Midjourney had already taken over the zeitgeist of collective thought amongst those that were too lazy, and, even then, IP lawsuits can take years to resolve. Meanwhile, we're seeing movements in AI that are measured in hours.

Now, LLMs have had their purpose. I do use Gemini to do some searches, and to bring context to a lot of my problems with coding. In fact, I have a notice on exactly how I use AI in my programming, and photography workflows. I've had to do that so people understand that, when they work with me, I know what Generative Models are capable of, but I do set limits in my own workflows not just on the principle of the situation, but also because... well, they are just... shit.

See, the biggest problem that I have with Generative models is that there is no humanity to them. They take things so literally every time, and they will try to be more helpful than they need to be. If you ask them to do just one thing, they will do the thing, and then they will do the next thing, and then the next thing, and then the next thing. It doesn't matter if the problem still exists on Step 1, nope, they are going to give you Steps 2 through 10 immediately. Meanwhile, people like Slopya Nadella are just foaming at the mouth to push Copilot on everything. It doesn't matter that these features create security holes. Nope, YOU MUST USE OUR MODEL.

{It's actually kinda funny: this entire LLM Slop Bullshit has created an entire open community around combating the slop, like some kind of weird shitty version of Viscera Cleanup Detail in the Open Source Community.}

Of course, we have those saying "oh, well, you can just turn it off." Except we shouldn't need to turn it off. It should default to not installed. If we want to have the latest Slop Model installed on our PC, we will ask for it to be installed. 100% of PC users have been around since before this LLM Slop existed. After all, this LLM slop only started at the end of 2022, which means, as of writing, LLM Slop bullshit is currently 4 years old, and that is only ChatGPT. More than likely, if you can type on a PC, you are at least 4 years old. I started coding when I was 5 years old, and I didn't even understand what a PC was and its implications until I was at least 3, and that was only because my father was a systems engineer. This stuff has been happening so rapidly that people haven't realized that we have been working overtime for only 4 years on the LLM War. I know, it seems like forever ago, but, really, this entire LLM Slop BS is very young.

So, why is this an issue? Why are these LLMs such a problem? Well, I'm not going to get into the Water or Power requirements that are basically destroying our economy. I'll leave that to another blog post. What I'm going to get into is the perception of things.

Well, the perception of AI is that it is lazy, and that it actually doesn't do what you ask for... almost ever. The example on the left is a prime example of why generative models just will never amount to anything: they don't understand. More than likely, for this picture on the left, they asked for a picture of a hand. Now, as a general rule, not only do we generally not take pictures of just our hands, but we also don't have a repository of clearly labelled hands. For the longest time, the way you could tell every Midjourney image was to just look for 4 or 6 fingers on a hand, or a hand with no thumb and all fingers. You still can do this in some images because Midjourney doesn't understand what a hand is.

It doesn't understand that a hand has 27 bones.

It doesn't understand that tendons connect muscle to bone, and ligaments connect bone to bone.

It doesn't understand that blood vessels keep the hand supplied with oxygen and nutrients, and take away carbon dioxide and waste products.

It doesn't understand that a hand bends at the spaces between bones.

It doesn't understand that humans (generally) have opposable thumbs for grabbing.

It doesn't understand that our skin protects the muscles, bones, and blood vessels inside.

It doesn't even understand that a finger can't push into another finger because that would break the laws of physics.

What it understands is "generally, when this group of pixels exists, this pixel is more than likely to have this color." This repeats over and over again until it is satisfied that every pixel is "the closest it can get to 100% that pixel colors are true." The reason why it builds four or six fingers is because it's not counting the number of fingers. It's seeing that a group of pixels has the outline of a finger, so there's probably another finger next to it. That's generally what happens. It isn't until it gets to the edge of the image, or the mass of fingers starts pushing into other pixels that it knows aren't supposed to be next to a finger, that it decides "Nah, that's enough." It can't understand when to stop drawing fingers because our fingers generally look the same; they just have different lengths.
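To make that concrete, here is a deliberately tiny sketch of the idea, and only the idea. This is not how Midjourney or any real image model is implemented; the one-dimensional "canvas", the `most_likely_next` lookup table, and both function names are made up for illustration. The point is just that a rule which only looks at the neighboring cell never counts anything, so the number of fingers ends up decided by where the canvas ends.

```python
def draw_fingers_locally(canvas_width: int) -> str:
    """Fill a 1-D toy canvas using only the previous cell as context.

    'F' is a finger-colored cell and '.' is a gap between fingers.
    The rule never counts fingers; it only asks what usually comes
    after the cell it just drew.
    """
    # Hypothetical "learned" pattern: a gap follows a finger, a finger follows a gap.
    most_likely_next = {"F": ".", ".": "F"}

    canvas = ["F"]  # start with a single finger
    while len(canvas) < canvas_width:
        # Look at the neighbor only; there is no notion of "a hand has five".
        canvas.append(most_likely_next[canvas[-1]])
    return "".join(canvas)


def count_fingers(canvas: str) -> int:
    """Count finger cells after the fact, which the generator never does."""
    return canvas.count("F")


if __name__ == "__main__":
    for width in (7, 9, 11):
        row = draw_fingers_locally(width)
        print(f"width {width:>2}: {row} -> {count_fingers(row)} fingers")
```

Run it with a few widths and the "hand" gets four, five, or six fingers purely because of where the edge of the image happens to be. Nothing in the loop knows that five is the right answer.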

This entire thing is a good illustration of why Generative Models like LLMs have limitations, and are not the be-all and end-all that these LLM Pushers are shovelling into our gullets against our will. Now, I'm not entirely against the use of Generative Models. Again, I have an AI Notice that tells you exactly how I use Algorithmic, Adaptive, LLM, and Generative Models. I use Gemini to help me search for things in my coding adventures (seriously, setting up Docker images is confusing, no matter how many times I've done it). However, these models are...

🔨⛏️ TOOLS 🔦✂️

It seems like every CEO that has been trained at McKinsey and Co. is just frothing at the mouth to erase thousands of jobs. Here's the thing, it will backfire... spectacularly. We're already seeing it:

- 95% of corporate generative AI pilots fail to produce any measurable return.

- Air Canada was held liable for giving incorrect information to a traveler through an AI Chatbot.

- A new report is showing that the layoffs are backfiring and these companies are now having to rehire workers.

Here's the thing, this backfire is going to cost these companies' short-sighted CEOs dearly. They fired really good workers that know how the systems work. Now, when the company comes back begging them to return or it will collapse, these workers will have the leverage. These corporations could have kept these workers on, and used Generative Models as a supplement. They would have probably given them a 5-8% raise just like normal. However, since the workers were fired, they have probably found other jobs, and they can now demand a steep rehiring premium. Instead of the company paying an extra 5-8% for the same worker, that worker is probably going to demand a 40-90% pay increase to compensate for how badly the company needs them, the salary they lost due to the CEO's stupidity, and the general overall increase in the global cost of living (mostly caused by these Generative Slop Machines).
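For a rough sense of scale, here is a quick back-of-the-napkin calculation. The percentages are the ones above; the 100,000 base salary is a purely hypothetical number picked to keep the math easy, and `pay_after_increase` is just an illustrative helper.

```python
def pay_after_increase(base_salary: int, percent: int) -> int:
    """Return the salary after a whole-number percentage increase."""
    return base_salary + base_salary * percent // 100


# Hypothetical base salary; only the percentages come from the post.
BASE_SALARY = 100_000

# What the company would have paid if it had kept the worker (5-8% raise).
normal_low = pay_after_increase(BASE_SALARY, 5)
normal_high = pay_after_increase(BASE_SALARY, 8)

# What the rehired worker might demand instead (40-90% increase).
rehire_low = pay_after_increase(BASE_SALARY, 40)
rehire_high = pay_after_increase(BASE_SALARY, 90)

# Extra yearly cost per worker compared with just giving the normal raise.
extra_best_case = rehire_low - normal_high    # cheapest rehire vs. biggest raise
extra_worst_case = rehire_high - normal_low   # priciest rehire vs. smallest raise

if __name__ == "__main__":
    print(f"Kept on with a raise: {normal_low:,} to {normal_high:,}")
    print(f"Rehired at a premium: {rehire_low:,} to {rehire_high:,}")
    print(f"Extra cost per worker: {extra_best_case:,} to {extra_worst_case:,} per year")
```

Under those assumptions, firing and rehiring costs somewhere between 32,000 and 85,000 more per worker, per year, than just keeping them would have.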

Unfortunately, we're hurtling towards a brick wall at supersonic speeds. These companies have spent 50-11,000% of their annual revenue committing to AI Buildouts, AI Investments, and Spend Commitments. All of this has been saddled with huge amounts of debt and circular investment. Money has been shoveled by the truckload into the Generative Model Money Furnace, and none of these companies have reported any profits arising from the use of Generative Models. They've reported billions, even trillions, in investments, but there are ZERO companies reporting profits. Heck, even NVIDIA, which has been at the center of this Slop-boom, reported a bunch of profits, but that completely discounts all of the circular spending commitments that they kind of leave out of their quarterly reports, and those would immediately put them in the red.

So, what are we going to see, and what can we do?

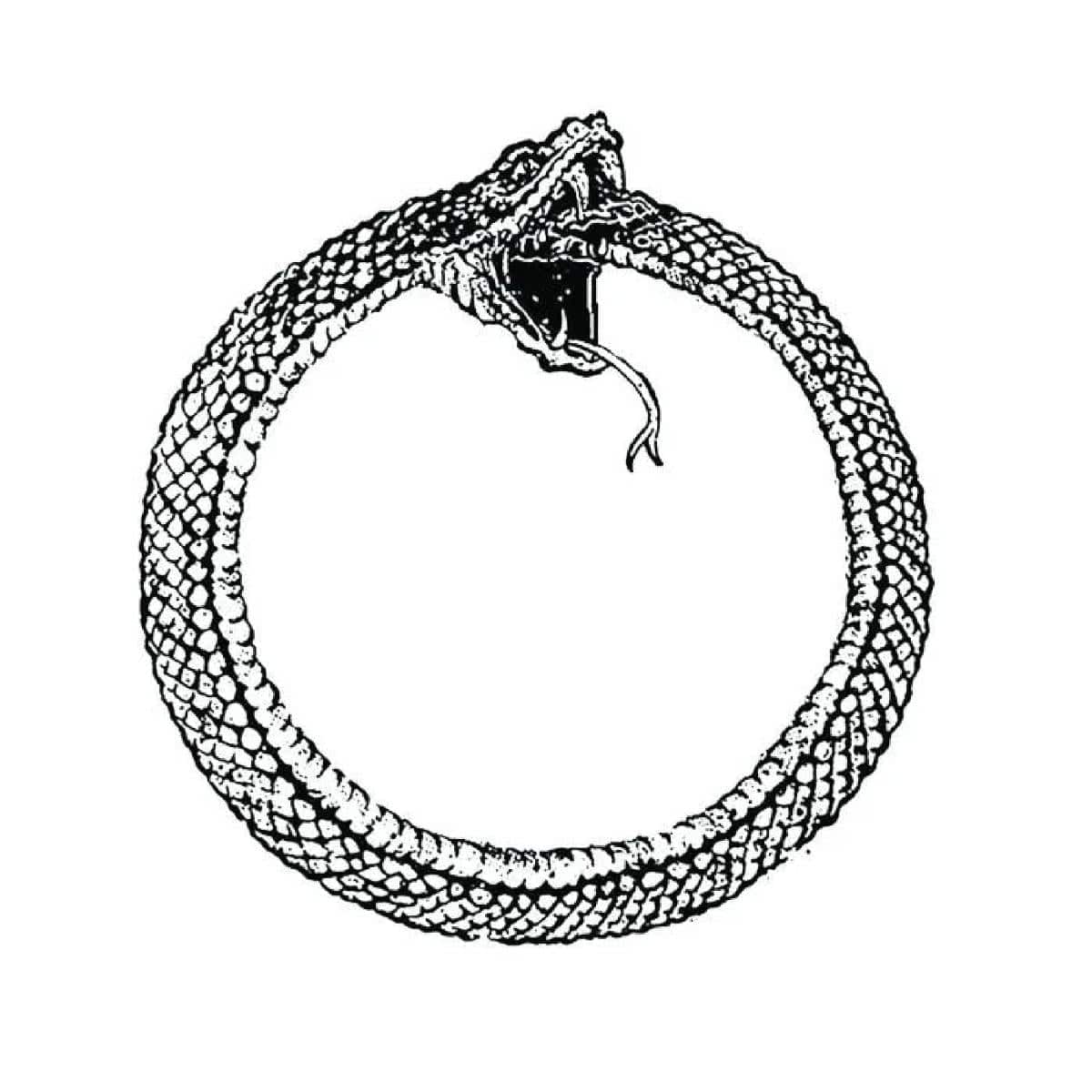

Well, in terms of the future, you can expect that, in the short term (being 1-12 months), we're still going to see layoffs. However, there is going to be a shift. The layoffs citing "AI" as a reason to cut headcount are going to dry up. Companies will quietly start raising their headcount, trying to cover their ass for all of the shit their company isn't able to accomplish now because they fired the good people. Quarterly reports will stop mentioning Generative models and "look to the future." Then, when the hiring roars back, people will notice that the flow of jobs has reversed, and every headhunter will be foaming at the mouth to secure high-paying jobs for people. Then, you'll see a major player drop something major: they are scaling back/cancelling their AI Deployment. It's going to send a tsunami through the stock market. The layoffs will resume, citing AI, but not because AI is making jobs easier. No, the layoffs will be in the AI Departments themselves. Thousands of vibe coders will be dropped as the Ouroboros comes to eat its own tail. Entire data centers will shut off over a short period of time. AI will whimper out faster than it took to build up. Then, the other side of the Tsunami will come: the banks will now be holding trillions in AI Investment loans made to these corporations that severely overspent on their AI Buildouts. The banks have to collect, and when they do, these companies will need to close up shop.

So, what can we do? Well, we can demand that our representatives pass legislation that holds these C-Suite Executives personally liable for making these bad investment and loan decisions. No longer should C-Suite Executives be able to hide behind the corporate veil when their actions lead to the collapse of the economy. The reason why the Dot Com bubble happened, and then the 2008 Financial Crisis happened, is because these C-Suite Executives are allowed to make these massive decisions that affect large swaths of our economy, and when the bubble bursts, they just retire on their golden parachutes. It should be treated as a high felony to do as much damage as these executives are racking up on these endless AI Credit Cards. If I racked up a million dollars on a credit card and then couldn't pay the bank back, the credit companies could ruin my life, permanently. We should treat these C-Suite executives with the exact same, or at least comparable, punishment. Perhaps then we will actually be free of the snake oil C-Suite Executive "just another million dollars in investment, bro" endless cycle.